Product Framework: Model Fallback and AI Pricing Strategy for better decision-making

Bruna Gomes | Mar 25, 2026

Have you ever stopped to think about how messy real life can be? We don’t live in perfectly divided time boxes. Sometimes your smartwatch reads your heart rate every second during a heavy gym session, but then goes hours without registering anything because you took it off to charge — or you’re completely still, deep in focused work.

Most traditional machine learning models hate that. They thrive on fixed time windows. When data is missing, a common workaround is to fill the gaps with zeros, which destroys any organic sense of cause and effect. Worse, the model loses temporal context entirely.

Picture this: your smartwatch reads 160 bpm. Is that good or bad? A classic medical classification AI might trigger an anomaly alert. But what if it’s 6 p.m. and you just finished a run?

Pure endorphins, everything’s fine. Now, what if it’s 7 a.m. and you just woke up in a panic remembering an urgent work deadline or an upcoming exam? Same metric reading, completely opposite contexts. That’s exactly where Liquid Neural Networks (LNNs) come in.

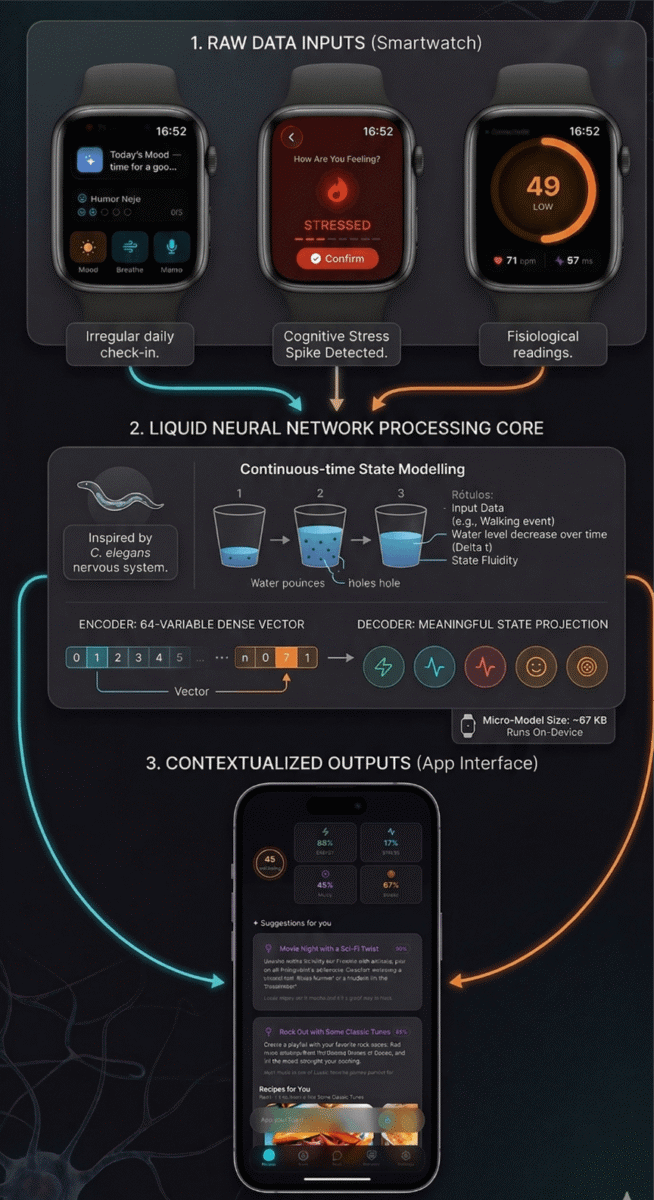

Developed by MIT researchers in 2021, the LNN was inspired by something that might seem strange: the nervous system of the C. elegans worm. This microscopic creature has only 302 neurons, yet demonstrates remarkably fast protective instincts and reflexes: fleeing threats, adapting to wind gusts and water currents, feeding, and reproducing. For reference, the human brain has roughly 86 billion neurons.

What makes LNNs fundamentally different is that they model time as a continuous variable. Rather than looking at rigid data blocks, the mathematical equations governing the neurons adapt constantly as time (Δt) passes and new stimuli arrive. The model’s internal state flows alongside incoming data — shifting and reshaping, just like a liquid conforming to whatever container it’s poured into.

Think of an LNN as a set of water buckets, each with a small hole in the bottom. When new data arrives, it fills one of those buckets, and the water level represents that information. But because of the hole, the water level gradually drops over time.

That doesn’t mean the information disappears entirely — it just means its impact on the present moment has diminished. If you do yoga at 7 a.m., the bucket is full (high endorphins). By 6 p.m., only a little water remains at the bottom — enough for the system to know you had an active morning, but not enough to dictate how you feel at that exact moment.
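The leaky bucket can be sketched in a few lines of Python. This is a minimal illustration rather than the model itself: the function name `leak` and the 3-hour half-life are assumptions chosen for the example. It shows why gaps of any length are easy to handle, since the elapsed time goes directly into the decay formula.

```python
def leak(level, hours_elapsed, half_life_hours=3.0):
    """Decay a bucket level over an arbitrary gap (the half-life is an assumed value)."""
    return level * 0.5 ** (hours_elapsed / half_life_hours)

# Yoga at 7 a.m. fills the bucket; by 6 p.m. (11 hours later) most of it has drained.
morning_level = 1.0
evening_level = leak(morning_level, hours_elapsed=11.0)
print(f"Level at 6 p.m.: {evening_level:.3f}")

# Irregular sampling is not a problem: two uneven gaps equal one long gap.
two_steps = leak(leak(morning_level, 2.0), 9.0)
```

Because the decay depends only on elapsed time, nothing needs to be zero-filled while the watch is on its charger.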

And the best part? While massive Large Language Models (LLMs) require billion-dollar data centers and hundreds of gigabytes to run, an LNN can weigh as little as 67 KB. It can live directly on a smartwatch, processing everything without breaking a sweat on the processor.

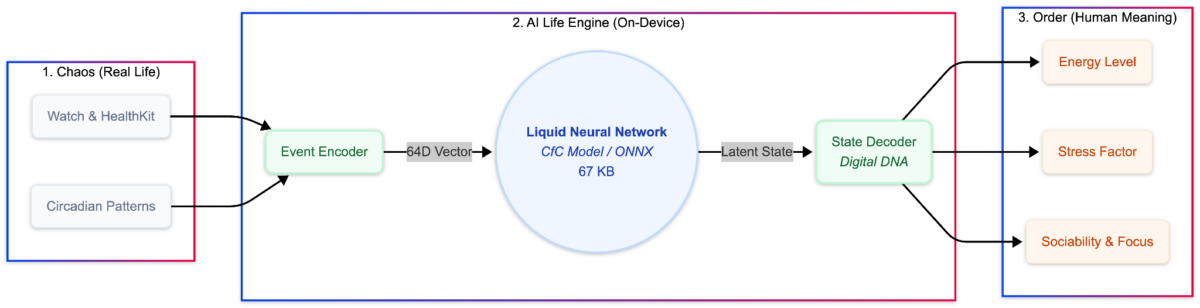

Don’t expect an LNN to write poetry or generate artwork — leave that to the LLMs. In practice, the LNN acts as a digital nerve center capable of interpreting the chaotic flow of everyday life instantly and invisibly. The architecture runs in three steps:

1. Everything starts at the wrist, which captures both raw physiological signals such as heart rate and HRV (heart rate variability) and rapid emotional check-ins that flag stress spikes. That stream of irregular data flows into the “liquid processing core” (the LNN).

2. Running directly on-device at just 67 KB, the LNN uses the continuous-time model (the leaky buckets) to absorb each input, update all 64 dimensions of the vector in the Encoder, and pass the result to the Decoder.

3. The final output surfaces in the mobile app. Instead of cold graphs, the app delivers fully contextualized readings (Energy, Stress, Focus) that unlock the system’s real value: recommending a relaxing classic rock playlist or a Sci-Fi film at exactly the moment you need it, closing the loop.
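The on-device update described above can be sketched as follows. The article does not give its equations, so this assumes the liquid time-constant (LTC) formulation with its fused solver step. The weights are random placeholders, and every name here (`ltc_step`, `w_in`, `w_rec`, `target`), the three-signal input, and the 30-minute time constant are assumptions for illustration.

```python
import numpy as np

def ltc_step(state, inputs, dt, w_in, w_rec, bias, tau, target):
    """One fused-solver update of an LTC-style cell over a gap of dt."""
    # The gate depends on the current state and the incoming reading.
    gate = 1.0 / (1.0 + np.exp(-(w_rec @ state + w_in @ inputs + bias)))
    # Larger dt pulls the state further toward its input-dependent equilibrium.
    return (state + dt * gate * target) / (1.0 + dt * (1.0 / tau + gate))

rng = np.random.default_rng(0)
hidden_dim, input_dim = 64, 3
w_in = rng.normal(scale=0.5, size=(hidden_dim, input_dim))    # untrained placeholders
w_rec = rng.normal(scale=0.1, size=(hidden_dim, hidden_dim))
bias = np.zeros(hidden_dim)
tau = np.full(hidden_dim, 30.0)                # assumed time constant, in minutes
target = rng.uniform(-1.0, 1.0, hidden_dim)    # the "A" vector in the LTC equation

# (minutes since previous reading, [heart rate, HRV, steps] scaled to 0..1)
readings = [(1.0, [0.80, 0.30, 0.90]), (1.0, [0.82, 0.30, 0.90]), (120.0, [0.40, 0.60, 0.00])]
state = np.zeros(hidden_dim)
for dt, values in readings:
    state = ltc_step(state, np.array(values), dt, w_in, w_rec, bias, tau, target)
```

The time gap `dt` is an explicit argument, so a one-minute gap and a two-hour gap go through the same function without resampling or padding.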

Compressing your life into 64 numbers is cool, but what do we do with that? We use a Decoder: a final layer that projects the abstract vector into metrics you actually understand — Energy, Focus, Stress, or Sociability. This is where numbers become feeling.
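One way to picture that final layer is a small linear projection followed by a squashing function. The sketch below is an assumption about the shape of such a Decoder, not the production code: the weights are random and untrained, and the `decode` function and `METRICS` tuple are hypothetical names.

```python
import numpy as np

METRICS = ("energy", "focus", "stress", "sociability")

def decode(vector, weights, bias):
    """Project a 64-dimensional state onto named metrics, each as a 0-100 percentage."""
    logits = weights @ vector + bias
    percentages = 100.0 / (1.0 + np.exp(-logits))   # sigmoid scaled to percent
    return dict(zip(METRICS, percentages.tolist()))

rng = np.random.default_rng(42)
weights = rng.normal(scale=0.3, size=(len(METRICS), 64))   # placeholder, untrained
bias = np.zeros(len(METRICS))
state_vector = rng.uniform(0.0, 1.0, size=64)              # stand-in for the LNN state

metrics = decode(state_vector, weights, bias)
print({name: round(value, 1) for name, value in metrics.items()})
```

In a trained system, each row of `weights` would encode which coordinates of the state matter for that metric.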

Imagine each position in that 64-dimensional vector as a coordinate in your digital brain’s “state cloud.” If the value responsible for mapping your physical activity level sits at 0.42323, the system understands you’re at rest.

When your smartwatch detects the start of a brisk walk, the LNN processes that new “drop” of information and lets that value flow organically toward 0.78544. Because the network perceives that this shift coincided with a sustained rise in the step sensor, the Decoder translates it as a real increase in Energy.

In our LNN use case, we correlate multiple contexts. For example, if you activate Do Not Disturb or a long meeting starts on your calendar, another coordinate in the vector might climb gradually from 0.21000 (distracted state) to 0.89012.

The Decoder reads that and sets your Focus as high. Similarly, if the system detects a sudden heart rate spike while the movement sensor reads zero (you’re just sitting), a tension coordinate shifts toward 0.65000. The Decoder recognizes the absence of physical activity and concludes: “This isn’t aerobic exercise. This is a cognitive Stress spike.” It’s from this continuous dance of variables that the model’s genuine human-context awareness is born.

```python
# 1. Raw sensor data (e.g., Apple Health via Watch)
raw_event = {
    "type": "exercise",
    "exercise_type": "walking",
    "duration_minutes": 20,
    "intensity": 0.65,  # Brisk walk / aerobic pace
    "calories_burned": 115,
    "average_hr_zone": 2,  # Fat-burning zone
    "timestamp": "2026-03-31T08:30:00Z"
}

# 2. Initialize Encoder and Decoder
encoder = EventEncoder(output_dim=64)
decoder = StateDecoder(hidden_dim=64)

# 3. "Processing": Transform into dense vector
vector = encoder.encode(raw_event)

# 4. Translation: Back to the real world
metrics = decoder.decode(vector)

print(f"Current State: Energy: {metrics['energy']:.1f}% | Stress: {metrics['stress']:.1f}%")
# Output: Current State: Energy: 78.5% | Stress: 12.0%
```

Raw event: 20-min walk, intensity 0.65, 115 calories. Process: The Encoder generates the dense vector and the Decoder interprets it. Result: Current State: Energy: 78.5% | Stress: 12.0%
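The snippet above relies on an `EventEncoder` whose definition is not shown. For readers who want to experiment, here is a hypothetical stand-in: it scales a few numeric fields, one-hot encodes the exercise type, and pushes the result through a fixed random projection. The scaling constants, the list of exercise types, and the projection itself are all assumptions, so the numbers it produces will not match the article's output.

```python
import numpy as np

class EventEncoder:
    """Hypothetical stand-in: maps an event dict to a fixed-size dense vector."""

    EXERCISE_TYPES = ("walking", "running", "cycling")   # assumed vocabulary

    def __init__(self, output_dim=64, seed=0):
        n_features = 4 + len(self.EXERCISE_TYPES)
        rng = np.random.default_rng(seed)
        # Fixed random projection; a real encoder would learn these weights.
        self.projection = rng.normal(scale=0.5, size=(output_dim, n_features))

    def encode(self, event):
        one_hot = [float(event.get("exercise_type") == t) for t in self.EXERCISE_TYPES]
        features = np.array([
            event.get("duration_minutes", 0) / 60.0,   # rough scaling to about 0..1
            event.get("intensity", 0.0),
            event.get("calories_burned", 0) / 500.0,
            event.get("average_hr_zone", 0) / 5.0,
            *one_hot,
        ])
        return np.tanh(self.projection @ features)     # keep values in [-1, 1]

raw_event = {
    "type": "exercise",
    "exercise_type": "walking",
    "duration_minutes": 20,
    "intensity": 0.65,
    "calories_burned": 115,
    "average_hr_zone": 2,
}
vector = EventEncoder(output_dim=64).encode(raw_event)
print(vector.shape)
```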

The secret lies in Rhythms. The LNN doesn’t just look at what you did right now. It understands circadian rhythms. It knows that 100 bpm at 3 a.m. is a warning sign, but at 3 p.m. it might just be the coffee kicking in. Because the model is so tiny, all of this happens on-device. Your data doesn’t need to travel to the cloud — it stays with you.
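The article does not say how time of day reaches the model. One common option, shown here as an assumption, is to encode the clock as a point on a circle so that 11:59 p.m. and 12:01 a.m. land next to each other. The function name `time_of_day_features` is hypothetical.

```python
import math
from datetime import datetime

def time_of_day_features(timestamp):
    """Encode the clock time as (sin, cos) so midnight wraps around smoothly."""
    hours = timestamp.hour + timestamp.minute / 60.0
    angle = 2.0 * math.pi * hours / 24.0
    return math.sin(angle), math.cos(angle)

# Two minutes apart across midnight: almost identical features.
late = time_of_day_features(datetime(2026, 3, 31, 23, 59))
early = time_of_day_features(datetime(2026, 4, 1, 0, 1))
midnight_gap = math.dist(late, early)

# 3 a.m. and 3 p.m. sit on opposite sides of the circle.
night = time_of_day_features(datetime(2026, 3, 31, 3, 0))
afternoon = time_of_day_features(datetime(2026, 3, 31, 15, 0))
day_gap = math.dist(night, afternoon)
```

Fed alongside the heart rate, these two numbers give the network what it needs to treat 100 bpm at 3 a.m. differently from 100 bpm at 3 p.m.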

When data is missing, traditional models panic. To solve this, we use algorithmic humility. The system calculates a Confidence Index. If you went the whole weekend without your watch, the network acknowledges that uncertainty has grown:

```python
# The Decoder examines recent vector stability
metrics = decoder.decode_with_confidence(hidden_vector, history=recent_states)

current_energy, confidence = metrics["energy"]

print(f"Energy: {current_energy:.1f}% | AI Confidence: {confidence:.0%}")
# Output: Energy: 60.0% | AI Confidence: 35% (Insufficient recent temporal data)
```

Example: Energy: 60.0% | AI Confidence: 35% (Insufficient recent temporal data).
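How the Confidence Index is computed is not spelled out, so the following is only one plausible heuristic: confidence falls as the last reading gets older and as recent states become less stable. The function name `confidence_index`, the 48-hour decay scale, and the way the two factors are combined are all assumptions.

```python
import numpy as np

def confidence_index(recent_states, hours_since_last_reading, decay_hours=48.0):
    """Heuristic confidence in [0, 1]: fresh, stable histories score higher."""
    if len(recent_states) < 2:
        return 0.0                                   # nothing to judge stability on
    freshness = float(np.exp(-hours_since_last_reading / decay_hours))
    spread = float(np.mean(np.std(np.asarray(recent_states), axis=0)))
    stability = 1.0 / (1.0 + spread)                 # 1.0 when states are identical
    return freshness * stability

rng = np.random.default_rng(1)
steady_week = [np.full(64, 0.5) for _ in range(5)]       # stable recent states
noisy_week = [rng.uniform(0.0, 1.0, 64) for _ in range(5)]

fresh = confidence_index(steady_week, hours_since_last_reading=1.0)
after_weekend = confidence_index(steady_week, hours_since_last_reading=60.0)
unstable = confidence_index(noisy_week, hours_since_last_reading=1.0)
print(f"fresh: {fresh:.0%} | after weekend: {after_weekend:.0%} | unstable: {unstable:.0%}")
```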

Another technique is Drift calculation. We measure the exact mathematical distance between the pre-event and post-event states. If a 15-minute meeting causes a major rupture in your rhythmic pattern, the system detects that your inertia has been broken:

# Advance the network with the newly arrived input

new_state = lnn.forward(event_vector, current_state, time_delta)

# How deep was the mathematical disruption?

drift = np.linalg.norm(new_state - current_state)

if drift > 1.2:

print("Alert: That 15-minute meeting completely broke your daily rhythmic inertia!")

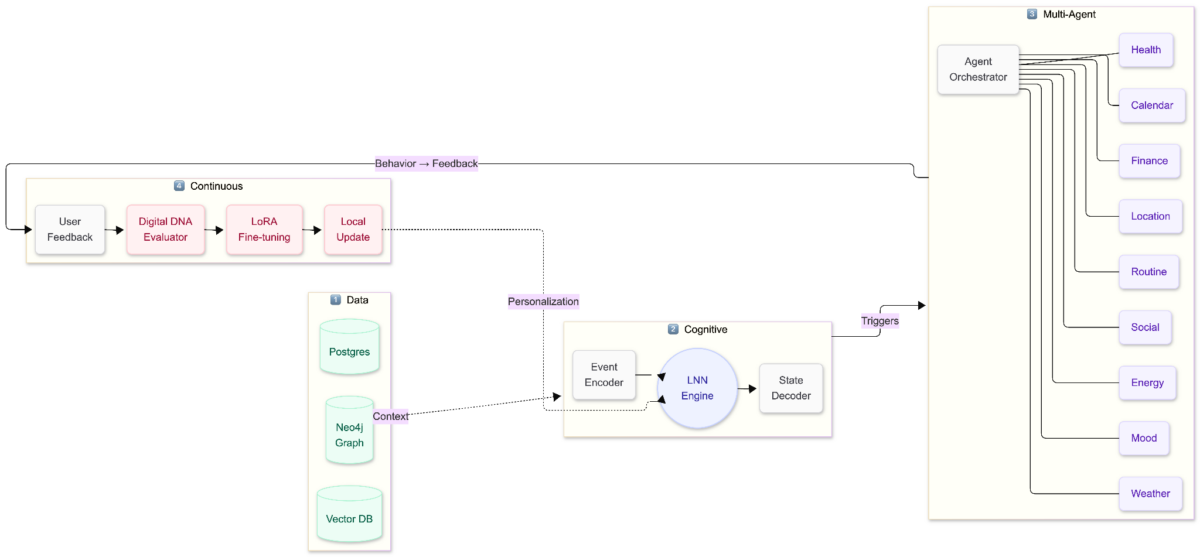

Code language: PHP (php)The LNN is the heart, but it doesn’t operate alone. It connects the efficiency of the liquid model to an ecosystem of specialized agents. While the liquid network senses your real-time state, calendar, health, and routine agents observe long-term patterns to continuously update your Digital DNA.

Mapping latent states is the foundation for Hyper-Contextual Commerce. Companies stop selling products and start delivering solutions at exactly the right moment.

Unlike a standard AI that ships frozen, the LNN functions like a Digital DNA. If it suggests you’re stressed and you correct it, saying you’re actually excited, it learns from that feedback.
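The mechanism behind that feedback loop is not described, so here is one simple possibility, offered as an assumption: treat the Stress output as a logistic unit and take a single gradient step toward the user's correction. The names `feedback_update` and `stress_weights`, the random weights, and the learning rate are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def feedback_update(weights, state_vector, corrected_label, learning_rate=0.1):
    """One gradient step on cross-entropy loss toward the user's correction."""
    predicted = sigmoid(weights @ state_vector)
    return weights - learning_rate * (predicted - corrected_label) * state_vector

rng = np.random.default_rng(7)
state_vector = rng.uniform(0.0, 1.0, size=64)      # current 64-dimensional state
stress_weights = rng.normal(scale=0.1, size=64)    # placeholder decoder row for Stress

before = sigmoid(stress_weights @ state_vector)
# The user says "not stressed, just excited": the target for Stress becomes 0.
stress_weights = feedback_update(stress_weights, state_vector, corrected_label=0.0)
after = sigmoid(stress_weights @ state_vector)
print(f"Stress estimate before: {before:.2f} | after: {after:.2f}")
```

The next time a similar state appears, the Stress estimate starts lower, which is the kind of gradual personal adaptation the article describes.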

This intelligence is universal. With just a few kilobytes, it can live in your phone, your watch, or your car. Over time, the network molds itself to your unique patterns. We move from reactive AI to proactive AI. Technology is finally adapting to us and not the other way around.

An LNN is a type of neural network developed by MIT in 2021, inspired by the nervous system of the C. elegans worm, which has only 302 neurons. Unlike traditional AI, LNNs model time as a continuous variable, allowing the mathematical equations governing the neurons to adapt constantly as time passes and new stimuli arrive.

Traditional AI relies on fixed time windows and fills missing data with zeros, destroying cause-and-effect relationships and ignoring temporal context. LNNs instead treat time as continuous, functioning like water buckets with holes: new data fills the bucket, and its impact gradually diminishes over time without disappearing entirely. When data is missing, LNNs use a Confidence Index to acknowledge uncertainty rather than panicking.

The architecture runs in three steps: (1) Sensors listen, capturing raw organic inputs from wearables like steps, sleep, and calories; (2) The Encoder processes this data into a vector of 64 numerical variables; (3) The Decoder translates that mathematical output back into real-world metrics like Energy, Stress, or Focus.

LNNs can weigh as little as 67 KB, compared to Large Language Models (LLMs) which require billion-dollar data centers and hundreds of gigabytes. This small size allows LNNs to live directly on a smartwatch or phone, processing data on-device so it doesn't need to travel to the cloud.

Mapping latent states enables companies to deliver solutions at the right moment. Examples include: retail and food suggesting quick, practical meals when energy is low after cognitive stress; behavioral finance pausing volatile market notifications during stress peaks to prevent impulsive decisions; and entertainment recommending focus-heavy content when concentration is high or social content when the user is primed to engage.