Product Framework: Model Fallback and AI Pricing Strategy for better decision-making

Bruna Gomes | Mar 25, 2026

Conversational AI has moved far beyond rule-based chatbots and scripted customer support flows. As a subset of Artificial Intelligence (AI), large language models (LLMs) in conversational interfaces are becoming a core interaction layer in digital products, powering customer support, internal tools, onboarding, search, and even decision-making workflows.

Yet, despite the rapid adoption of conversational AI, many initiatives fail to deliver real business value. In most cases, the issue is not model quality but a lack of structure connecting product strategy, user experience, and engineering execution.

We’ll see how to architect and operate conversational AI features that are scalable, clarify how they differ from generative AI, explore leading tools, and outline how to build scalable conversational AI solutions aligned with real business outcomes.

What is conversational AI, and why does it matter for digital products?

Conversational AI refers to systems that enable users to interact with software using natural language, through text or voice, powered by technologies such as:

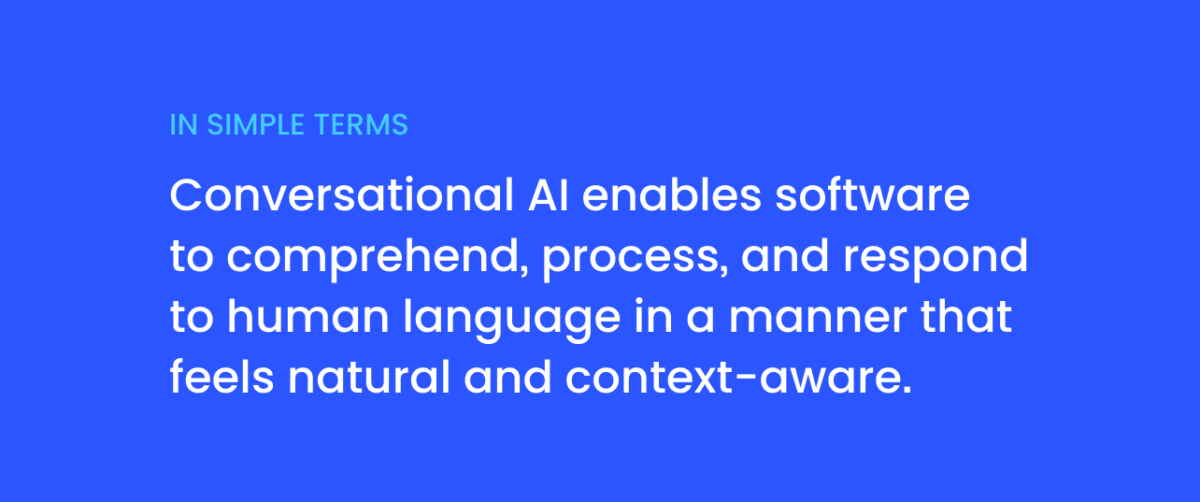

In simple terms:

Unlike traditional interfaces, conversational AI reduces friction by allowing users to express goals in human terms instead of navigating rigid UI flows. When designed correctly, it can:

For product teams, conversational AI is no longer an experimental feature; it has become a standard tool, and it is increasingly becoming a strategic capability.

Read more: AI & Machine Learning Glossary: Key Terms for Modern Businesses

Yes. ChatGPT is a conversational AI system built on top of generative large language models developed by OpenAI. However, there’s an important nuance:

Enterprise-grade conversational AI solutions often combine:

In other words, ChatGPT is one example of a conversational AI assistant, but scalable product implementations require much more than a single API call.

Early chatbots focused primarily on automation through predefined rules and keyword matching. Modern conversational AI shifts the focus toward intent understanding and goal completion, enabling systems to adapt their responses to user context.

This evolution allows organizations to move from static Q&A interactions to dynamic, outcome-driven conversations — where success is measured by resolution quality, efficiency, and user satisfaction, not just response accuracy.

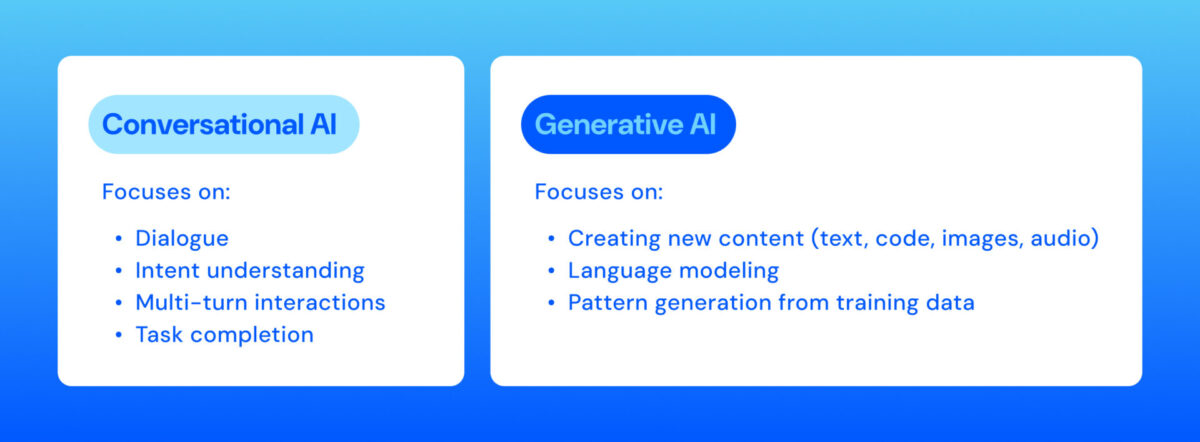

This is one of the most common questions in the market.

Think of it this way:

A conversational AI assistant may use generative AI internally, but it also includes memory management, orchestration, tool usage, and governance mechanisms. For enterprise applications, confusing these two concepts often leads to over-scoped initiatives or under-architected implementations.

One of the most important strategic decisions when designing conversational AI is determining whether AI is the core of the product or an enhancement to an existing experience.

AI-native products rely entirely on AI to deliver their value proposition. Without the AI layer, the product cannot function as intended. While this approach enables strong differentiation, it also introduces higher risk. Model failures, hallucinations, or performance degradation directly impact the product’s usability and credibility.

AI-native products require robust governance, continuous monitoring, and human-in-the-loop mechanisms from day one.

AI-enhanced products use AI to improve usability, personalization, or efficiency within an already functional system. In this case, AI delivers incremental value while the underlying product remains usable without it.

This approach typically involves lower risk and allows teams to iterate gradually, validating impact before expanding AI capabilities.

Read more: AI Use Cases & Applications: How Businesses Are Leveraging AI

A common failure pattern in conversational AI projects is starting with the solution instead of the problem. Adding a chat interface simply because it is trendy rarely leads to meaningful outcomes.

Without a clear understanding of user pain points and success metrics, conversational AI becomes an expensive experiment rather than a product capability.

Effective conversational AI design begins with clearly defined objectives, such as:

Every conversational feature should map directly to measurable business or user outcomes.

Trust is a critical factor in conversational UX. Users must understand what the system can do, why it responds a certain way, and how much control they have.

From the first interaction, users should have a clear mental model of the system’s capabilities and limitations. Poor expectation management often leads to frustration and a sense of failure, even when the system behaves correctly.

Whenever possible, conversational AI systems should provide explanations, references, or contextual cues that reduce the perception of a “black box.” Transparency increases user confidence and helps mitigate errors.

Mechanisms such as thumbs up/down, response comparison, or corrective feedback allow users to guide the system and provide valuable signals for monitoring and improvement.

Failures in AI systems are inevitable. What matters is how they are handled.

Conversational AI should fail gracefully by:

A silent or broken interaction erodes trust far more than an acknowledged limitation.

Keeping humans in the loop, especially for high-impact decisions, allows organizations to detect issues early, correct model behavior, and maintain accountability.

Scalable conversational AI systems treat LLMs as components within a broader architecture, not as monolithic decision engines.

An orchestration layer manages user intent, selects prompts, coordinates multiple model calls, and decides when external tools or workflows are required.

Complex conversations often involve several model interactions, such as intent classification, task decomposition, and response generation.

Context management is essential for meaningful conversations. This includes:

Ineffective context management can lead to information overload, which can be just as damaging to model performance as insufficient context.

Guardrails validate inputs and outputs before and after model execution. They help prevent policy violations, data leakage, invalid formats, and unsafe responses, ensuring compliance and reliability.

Read more: How to Integrate AI Into an App

LLMs have limited context windows. Sending excessive information increases cost and reduces decision quality. Effective systems use summarization, sliding windows, and structured memory to optimize token usage.

As conversational systems become more general-purpose, deciding which contexts truly matter becomes increasingly complex. Highly specialized systems are easier to optimize because their scope is well-defined.

RAG enables conversational AI systems to retrieve relevant internal documents and use them as a grounding context for responses. This significantly reduces hallucinations and improves accuracy.

Unlike fine-tuning, RAG allows teams to update information continuously. It also enables source attribution, increasing trust and auditability — especially in regulated environments.

Read more: Fine-Tuning vs. RAG: Choosing the Best Approach for Your AI Model

Operating conversational AI requires monitoring metrics beyond traditional software KPIs, including:

Techniques such as LLM-as-a-judge, semantic similarity analysis, and user feedback help identify hallucinations and performance degradation over time.

Because deterministic assertions do not work well for natural language, quality evaluation often relies on probabilistic and comparative methods, supported by human and AI reviewers.

Successful conversational AI systems are not defined by model choice alone. They are built on a clear product strategy, thoughtful UX design, robust architecture, and strong governance.

Whether AI is the core of the product or an enhancement, teams that invest in structure — from prompt design and context engineering to guardrails and monitoring — are far more likely to deliver scalable, trustworthy, and high-impact conversational experiences.

Conversational AI is a type of artificial intelligence that enables software to understand and respond to human language through text or voice. It combines technologies such as Natural Language Processing (NLP), Machine Learning (ML), and Large Language Models (LLMs) to create context-aware, multi-turn interactions that feel natural and goal-oriented.

Traditional chatbots rely on predefined rules and scripted flows. Conversational AI systems, on the other hand, understand user intent, manage context across multiple turns, and dynamically generate responses. Instead of matching keywords, they interpret meaning and adapt to different user scenarios.

Yes. ChatGPT is a conversational AI system powered by generative large language models. However, enterprise-grade conversational AI solutions typically go beyond a single model API. They include retrieval systems (RAG), orchestration layers, guardrails, workflow integrations, and compliance mechanisms to operate reliably at scale.

Generative AI focuses on creating new content such as text, code, or images. Conversational AI focuses on structured dialogue, multi-turn interactions, and task completion. Generative AI acts as the engine that produces language, while conversational AI is the experience layer that manages context, workflows, memory, and governance around that engine.

AI-native products depend entirely on AI to deliver their core value. If the AI fails, the product fails. This approach offers strong differentiation but carries higher operational risk. AI-enhanced products integrate AI to improve existing experiences. The system remains functional without AI, allowing teams to test impact incrementally with lower risk and greater control.

Senior Software Engineer at Cheesecake Labs, leading AI initiatives and building productivity-driven applications using Rust and TypeScript. She also heads the internal AI Guild, driving innovation across teams and projects.