Google I/O 2026: The Agentic Era is Here, and It’s a Builder’s Moment

Douglas da Silva | May 20, 2026

AI applications are undergoing a foundational transformation. Where we once relied on static, pipeline-driven AI Systems—like recommendation engines or classifiers—we’re now seeing a shift to AI Agents: dynamic, autonomous entities capable of perceiving, reasoning, and acting based on real-world context.

This shift mirrors changes in software architecture: from predictable workflows to autonomous orchestration loops. Let’s explore the architectural and implementation-level differences between these two paradigms, and what it means to build truly intelligent systems.

| | AI Systems | AI Agents |
|---|---|---|
| Purpose | Task-specific automation (e.g., chatbots, recommendations) | Autonomous problem-solving (e.g., scheduling, negotiation) to achieve goals |
| Autonomy | Low: requires explicit prompts/inputs | Low → High: makes decisions with a configurable amount of human input |
| Learning | Passive: improves via user feedback and retraining (e.g., A/B tests) | Active: self-improves through environmental interactions |
| Interaction | Question-answer: retrieves info from specified data sources | Objective-oriented: executes tasks (API calls, edits) |
| Use Cases | Customer support bots, recommendation engines | Autonomous supply chain, personal assistants |
| Example | Netflix recommendation algorithm | Amazon delivery route optimization |

Traditional AI systems are highly performant at specific tasks, but they’re static—they don’t change their behavior based on new context.

Agents, on the other hand, evolve with data, learn from feedback, and actively choose how to solve problems.

| Feature | AI Systems (Traditional ML / LLM Single-Pass) | AI Agents |
|---|---|---|
| Architecture Type | Pipeline or microservice | Modular, loop-based agent framework |
| State | Stateless | Stateful |
| Control Flow | Linear, manual | Dynamic, feedback-driven |
| Memory | None / input-bound context | Persistent, evolving memory (e.g., vector DBs) |
| Tool Use | Fixed functions or no integration | Adaptive tool use via APIs and plugins |
| Autonomy | Task-driven | Goal-driven |
| Integration | API endpoints or embedded services | Context-aware orchestration (MCP, tools) |
| Examples | Recommendation engines, fraud detection, translation | Research assistants, autonomous RAG systems, workflow bots |

Agents introduce a looped control architecture where reasoning is interleaved with perception and action.
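A minimal sketch of such a loop, assuming a toy environment and a rule-based `decide` method standing in for the LLM or planner. The names `CounterEnvironment`, `Agent`, and `run_agent` are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class CounterEnvironment:
    """Toy environment: the agent's goal is to raise `value` to `target`."""
    value: int = 0
    target: int = 3

    def observe(self) -> int:
        return self.value

    def apply(self, action: str) -> None:
        if action == "increment":
            self.value += 1


@dataclass
class Agent:
    # State carried across loop iterations (what makes the agent stateful).
    memory: list = field(default_factory=list)

    def decide(self, observation: int, target: int) -> str:
        # Stand-in for an LLM or planner call (assumption).
        return "increment" if observation < target else "stop"


def run_agent(agent: Agent, env: CounterEnvironment, max_steps: int = 10) -> list:
    for _ in range(max_steps):  # hard cap so the loop always terminates
        observation = env.observe()                     # perceive
        action = agent.decide(observation, env.target)  # reason
        agent.memory.append((observation, action))      # remember
        if action == "stop":
            break
        env.apply(action)                               # act
    return agent.memory


trace = run_agent(Agent(), CounterEnvironment())
```

Each iteration feeds the result of the previous action back in as the next observation, which is the main difference from a single-pass pipeline.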

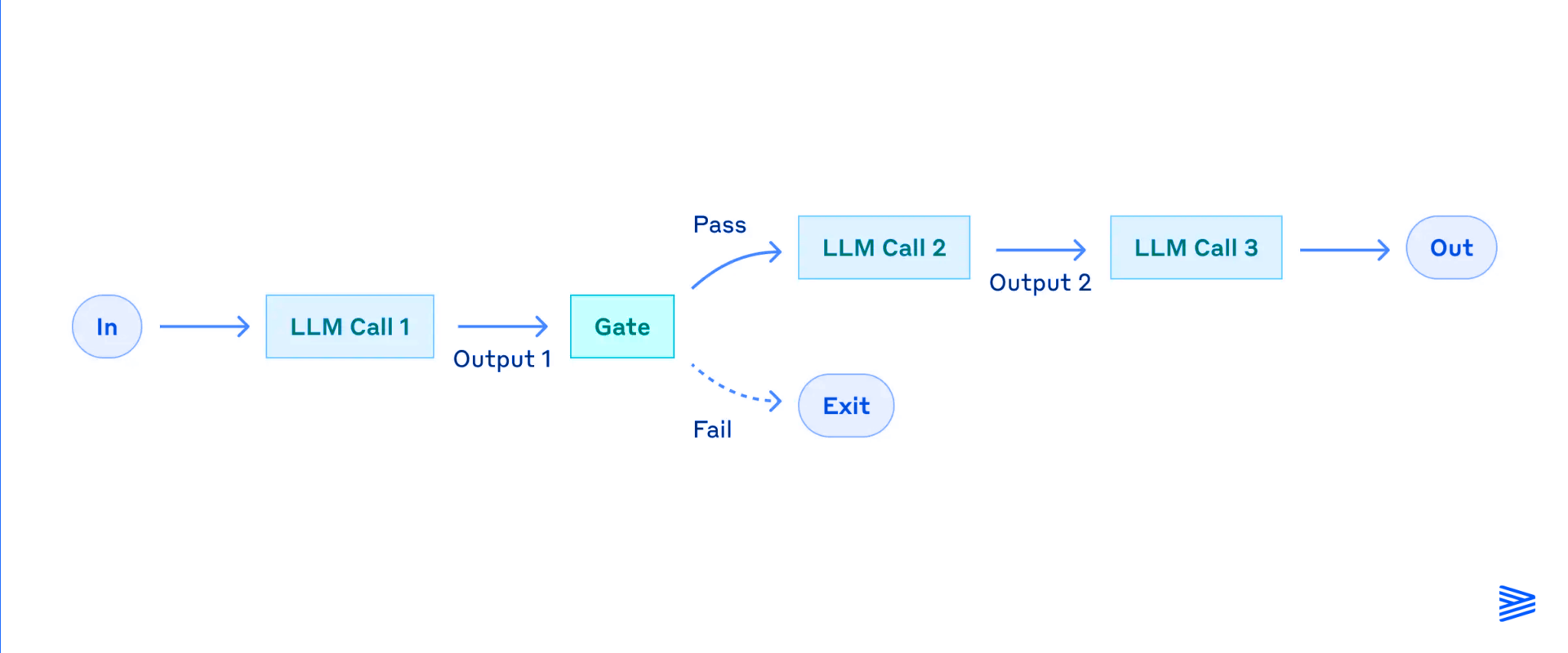

Prompt chaining decomposes a task into sequential LLM calls. Each step’s output feeds the next. Useful for step-by-step reasoning, programmatic checks, or validation stages.
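As a rough illustration, a two-step chain with a programmatic check between the steps might look like the sketch below. The functions `draft_outline` and `expand_outline` are hypothetical placeholders for model calls, not a real API.

```python
def draft_outline(topic: str) -> str:
    # Step 1: placeholder for an LLM call that drafts an outline.
    return f"1. Intro to {topic}\n2. Details\n3. Summary"


def outline_is_valid(outline: str) -> bool:
    # Programmatic gate between steps: require at least three outline lines.
    return len(outline.splitlines()) >= 3


def expand_outline(outline: str) -> str:
    # Step 2: placeholder for an LLM call that expands each outline line.
    return "\n".join(f"{line} (expanded)" for line in outline.splitlines())


def run_chain(topic: str) -> str:
    outline = draft_outline(topic)
    if not outline_is_valid(outline):
        raise ValueError("outline failed validation; stopping the chain")
    return expand_outline(outline)  # output of step 1 feeds step 2


result = run_chain("agents")
```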

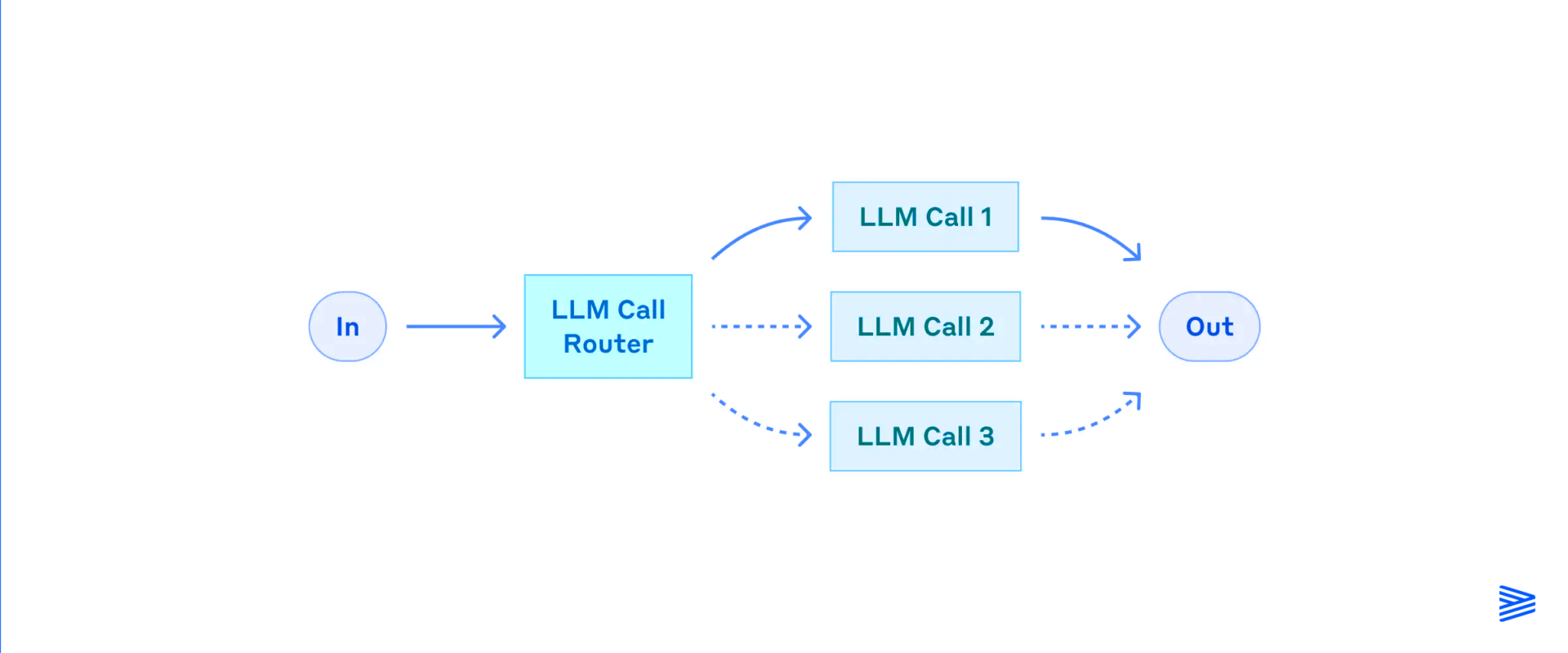

Routing classifies user input and directs it to specialized agents, prompts, or tools. Common in multi-skill agents (e.g., scheduling, research, support).
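A small sketch of the idea, assuming a keyword-based `classify` function in place of an LLM classifier. The handler names and labels are illustrative only.

```python
def classify(message: str) -> str:
    # Stand-in for an LLM classifier (assumption): simple keyword matching.
    text = message.lower()
    if "meeting" in text or "schedule" in text:
        return "scheduling"
    if "refund" in text or "broken" in text:
        return "support"
    return "research"  # default route


# Each label maps to a specialized handler (agent, prompt, or tool).
HANDLERS = {
    "scheduling": lambda m: f"[scheduling] {m}",
    "support": lambda m: f"[support] {m}",
    "research": lambda m: f"[research] {m}",
}


def route(message: str) -> str:
    return HANDLERS[classify(message)](message)


reply = route("Schedule a meeting with Ana")
```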

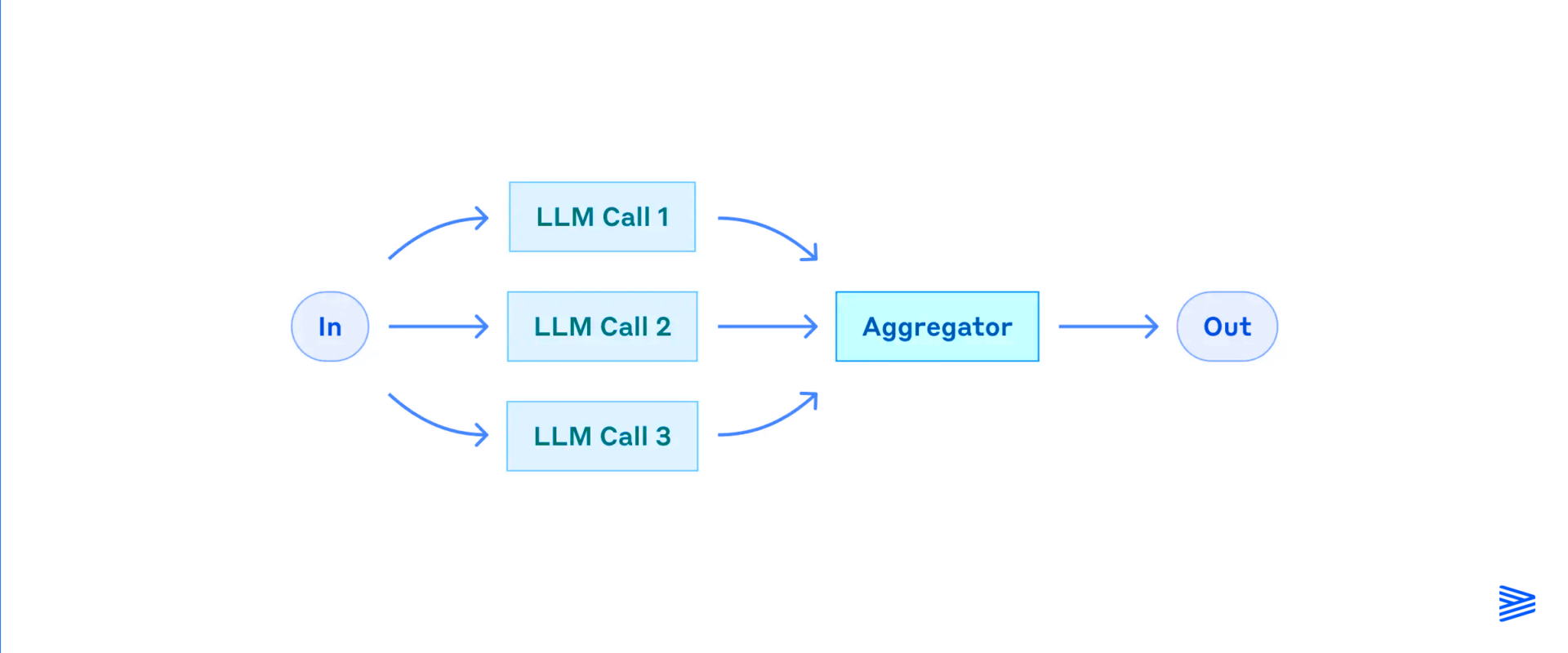

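Parallelization executes tasks concurrently, either by sectioning a task into independent subtasks or by voting across multiple generations.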
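One possible sketch of concurrent execution, here in its voting form: several samples run in parallel and the majority answer wins. The function `fake_model` is a deterministic stand-in for a model call and is an assumption of this example.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def fake_model(seed: int) -> str:
    # Deterministic stand-in for a sampled model call (assumption):
    # seed 0 disagrees with seeds 1 and 2.
    return "spam" if seed % 3 else "not spam"


def vote(n_samples: int = 3) -> str:
    # Run the samples concurrently, then keep the most common answer.
    with ThreadPoolExecutor(max_workers=n_samples) as pool:
        answers = list(pool.map(fake_model, range(n_samples)))
    return Counter(answers).most_common(1)[0][0]


winner = vote()
```

Sectioning follows the same shape: map a worker function over independent subtasks and merge the results instead of counting them.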

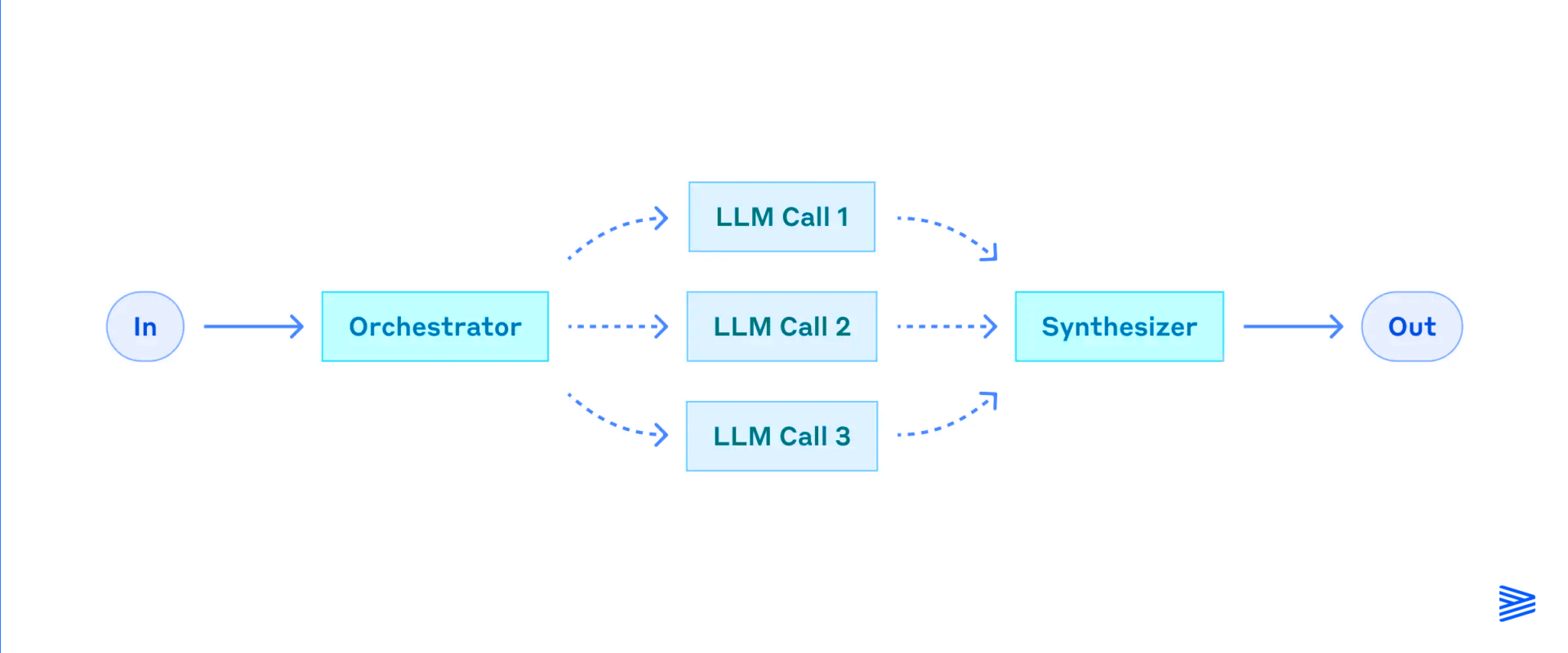

In the orchestrator-worker pattern, a central agent plans and delegates subtasks to sub-agents. Useful for complex tasks like report generation, planning, or multi-modal coordination.
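The delegation step can be sketched as follows, assuming a fixed `plan` function in place of an LLM planner and two hypothetical workers.

```python
def plan(task: str) -> list:
    # Stand-in for an LLM planner (assumption): returns (worker, payload) pairs.
    return [("research", task), ("write", task)]


# Hypothetical sub-agents, keyed by the names the planner uses.
WORKERS = {
    "research": lambda payload: f"notes on {payload}",
    "write": lambda payload: f"draft about {payload}",
}


def orchestrate(task: str) -> str:
    # The orchestrator delegates each planned subtask, then merges the results.
    results = [WORKERS[name](payload) for name, payload in plan(task)]
    return " | ".join(results)


report = orchestrate("Q3 report")
```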

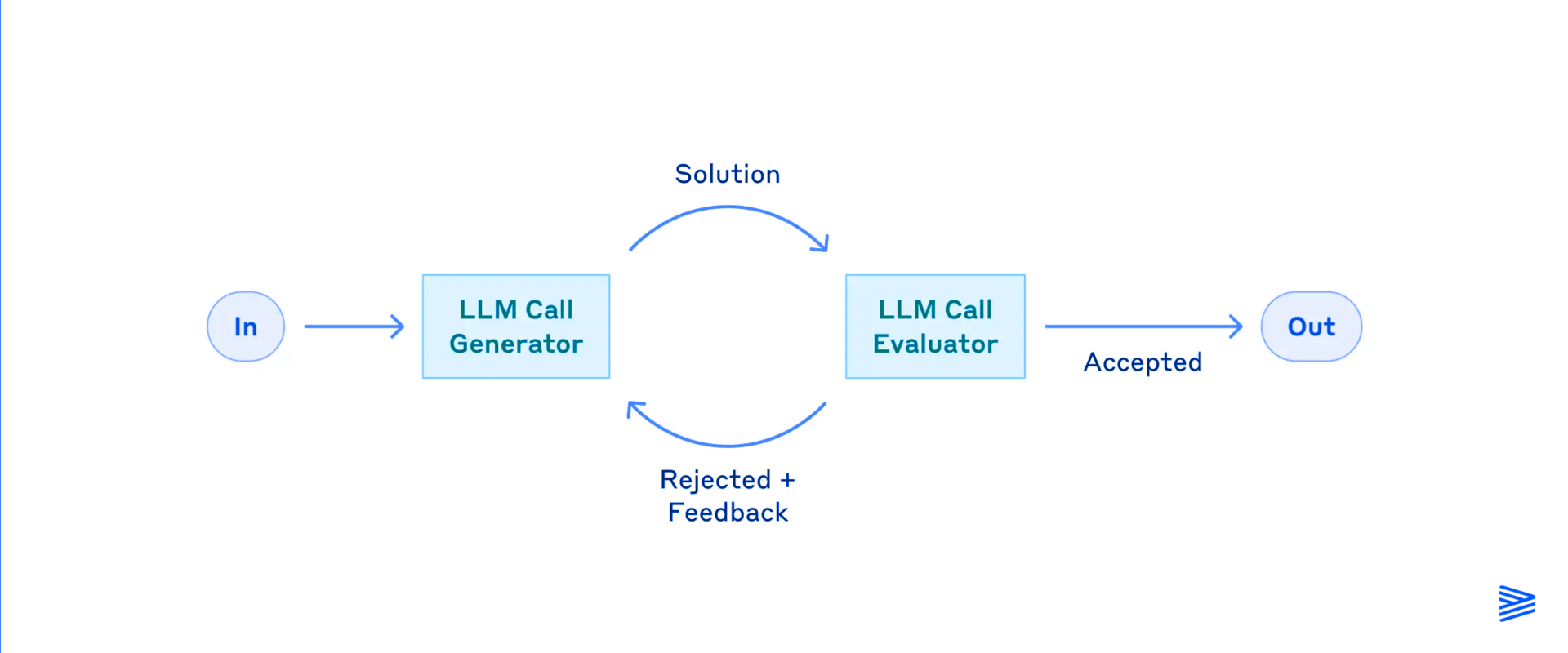

The evaluator-optimizer loop pairs a generator agent with a reviewer agent. Output is iteratively improved using feedback. Common in research, ideation, or product copy workflows.
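A minimal sketch of that feedback loop, with rule-based `generate` and `review` functions standing in for the two agents. Both are hypothetical, and the round limit is an assumption added so the loop cannot run forever.

```python
def generate(brief: str, feedback: list) -> str:
    # Generator stand-in (assumption): applies any feedback it has received.
    draft = f"Buy {brief}"
    if "add call to action" in feedback:
        draft += " today!"
    return draft


def review(draft: str):
    # Reviewer stand-in (assumption): returns a note, or None to accept.
    if not draft.endswith("!"):
        return "add call to action"
    return None


def refine(brief: str, max_rounds: int = 3) -> str:
    feedback = []
    draft = generate(brief, feedback)
    for _ in range(max_rounds):  # bounded number of review rounds
        note = review(draft)
        if note is None:
            break  # reviewer accepted the draft
        feedback.append(note)
        draft = generate(brief, feedback)
    return draft


final_copy = refine("widgets")
```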

Challenge: How to persist and retrieve relevant context efficiently

Solution: Vector DBs (e.g., Pinecone, Weaviate) with metadata filtering; session managers or short-term caches
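To make the retrieval idea concrete, here is a toy in-memory store that filters by metadata and then ranks by cosine similarity. It is only a sketch: `MemoryStore` is a hypothetical class, the vectors are hand-written rather than produced by an embedding model, and it does not reflect the Pinecone or Weaviate client APIs.

```python
import math


def cosine(a: list, b: list) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class MemoryStore:
    """Toy vector memory; a real system would use a vector database."""

    def __init__(self):
        self.items = []  # (vector, text, metadata) tuples

    def add(self, vector: list, text: str, **metadata) -> None:
        self.items.append((vector, text, metadata))

    def search(self, query: list, top_k: int = 1, **filters) -> list:
        # Metadata filter first, then similarity ranking on what remains.
        candidates = [
            item for item in self.items
            if all(item[2].get(key) == value for key, value in filters.items())
        ]
        ranked = sorted(candidates, key=lambda item: cosine(query, item[0]), reverse=True)
        return [text for _, text, _ in ranked[:top_k]]


store = MemoryStore()
store.add([1.0, 0.0], "prefers morning meetings", user="ana")
store.add([0.9, 0.1], "prefers evening meetings", user="bo")
store.add([0.0, 1.0], "allergic to peanuts", user="ana")

hits = store.search([1.0, 0.0], top_k=1, user="ana")
```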

Challenge: Integrating with dozens of APIs is fragile and costly

Solution: MCP abstracts tools into interoperable “servers”; allows rapid scaling without glue code
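The sketch below illustrates the general idea of a uniform tool interface: tools are registered once, discovered by name, and invoked through a single `call` method. It is not the MCP SDK or wire protocol; `ToolRegistry` and its methods are hypothetical names used for illustration.

```python
class ToolRegistry:
    """Toy registry exposing tools behind one discover-and-call interface."""

    def __init__(self):
        self._tools = {}  # name -> (description, function)

    def register(self, name: str, description: str):
        def decorator(fn):
            self._tools[name] = (description, fn)
            return fn
        return decorator

    def list_tools(self) -> dict:
        # Lets an agent discover which tools exist and what they do.
        return {name: desc for name, (desc, _) in self._tools.items()}

    def call(self, name: str, **arguments):
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name][1](**arguments)


registry = ToolRegistry()


@registry.register("add", "Add two integers")
def add(a: int, b: int) -> int:
    return a + b


total = registry.call("add", a=2, b=3)
```

Because the agent only depends on `list_tools` and `call`, adding a new tool does not require new glue code in the agent itself.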

Challenge: Agents can hallucinate, fail, or loop infinitely

Solution: Guardrails, redundancy, voting, and evaluator-agent feedback loops
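One simple guardrail is to validate every step's output and cap the number of attempts, so a failing or hallucinating step cannot loop forever. The sketch below assumes a hypothetical `flaky_step` that fails, then returns an invalid answer, then succeeds.

```python
class StepBudgetExceeded(RuntimeError):
    """Raised when a step never produces a valid output within its budget."""


def call_with_guardrails(step, validate, max_attempts: int = 3):
    last_error = None
    for attempt in range(1, max_attempts + 1):  # bounded retries, never infinite
        try:
            output = step(attempt)
        except Exception as exc:  # tool or model failure: record and retry
            last_error = exc
            continue
        if validate(output):  # reject outputs that fail the check
            return output
        last_error = ValueError(f"invalid output on attempt {attempt}: {output!r}")
    raise StepBudgetExceeded(f"gave up after {max_attempts} attempts") from last_error


def flaky_step(attempt: int) -> str:
    # Hypothetical step: times out, then returns a non-numeric answer, then succeeds.
    if attempt == 1:
        raise TimeoutError("tool timed out")
    if attempt == 2:
        return "maybe"
    return "42"


answer = call_with_guardrails(flaky_step, validate=str.isdigit)
```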

Challenge: Multi-step workflows are resource-intensive

Solution: Hybrid agents, caching, batching, and fine-tuning on narrow domains
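Caching is the easiest of these savings to show in a few lines. In the sketch below, repeated prompts are served from an in-process cache instead of triggering another model call. `cached_completion` is a hypothetical stand-in for a real, expensive call, and exact-match caching like this only helps when prompts repeat verbatim.

```python
from functools import lru_cache

stats = {"model_calls": 0}  # counts how often the expensive call really runs


@lru_cache(maxsize=256)
def cached_completion(prompt: str) -> str:
    # Stand-in for an expensive model call (assumption).
    stats["model_calls"] += 1
    return prompt.upper()


answers = [cached_completion(p) for p in ["hello", "hello", "world"]]
```

Three requests result in two underlying calls here, because the second "hello" is answered from the cache.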

AI Agents are not simply “better” AI Systems—they’re a different species altogether. They bring autonomy, adaptability, and memory to intelligent systems. But they also demand careful architectural planning, modular workflows, and robust infrastructure.

As the Model Context Protocol (MCP), vector databases, and multi-agent orchestration patterns mature, AI development will increasingly resemble the design of intelligent organizations, where software doesn’t just serve, but decides, acts, and evolves.


AI Systems are task-specific, static, and pipeline-driven (e.g., recommendation engines or classifiers), while AI Agents are dynamic, autonomous entities capable of perceiving, reasoning, and acting based on real-world context. AI Systems rely on explicit prompts and passive learning, whereas AI Agents make goal-driven decisions, use persistent memory, and self-improve through environmental interactions.

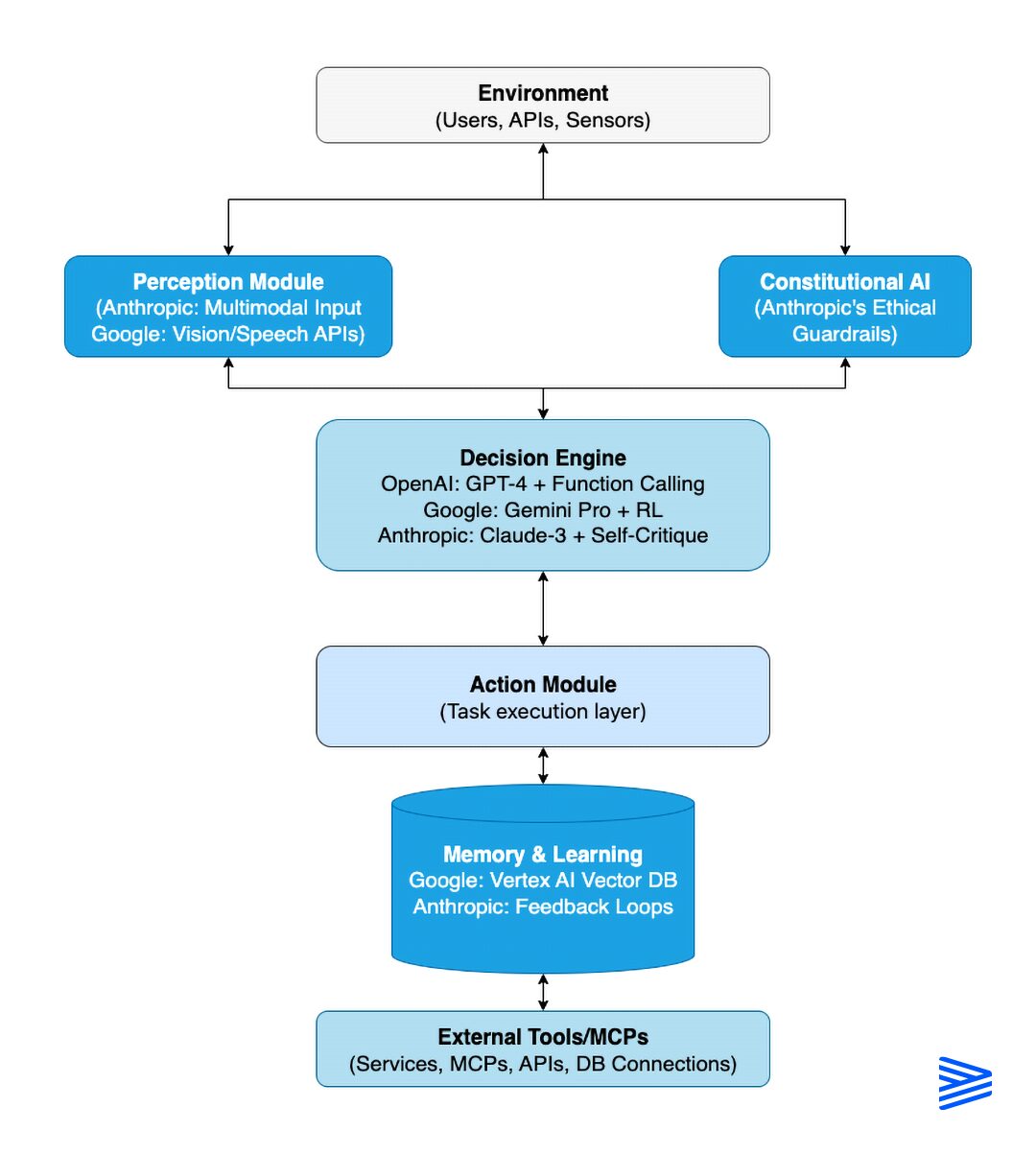

A modular AI Agent architecture includes: (1) Environment Interaction for real-time data gathering, (2) Multimodal Perception to convert raw signals into structured insights, (3) a Decision Engine typically powered by an LLM and enhanced with RAG and planning, (4) Action Execution through APIs and tools, (5) Memory & Learning using vector databases for long- and short-term context, and (6) an Integration Layer managed via MCP (Model Context Protocol).

Common workflow patterns include: Prompt Chaining (sequential LLM calls), Routing (classifying and directing input to specialized agents), Parallelization (sectioning tasks or voting across generations), Orchestrator-Worker Pattern (a central agent delegates to sub-agents), and Evaluator-Optimizer Loop (a generator agent paired with a reviewer for iterative improvement).

Key challenges include: state management (solved with vector DBs like Pinecone or Weaviate and session managers), tool integration (addressed via MCP abstraction), error handling and self-correction (using guardrails, redundancy, voting, and evaluator-agent feedback loops), and cost and latency optimization (mitigated through hybrid agents, caching, batching, and fine-tuning on narrow domains).

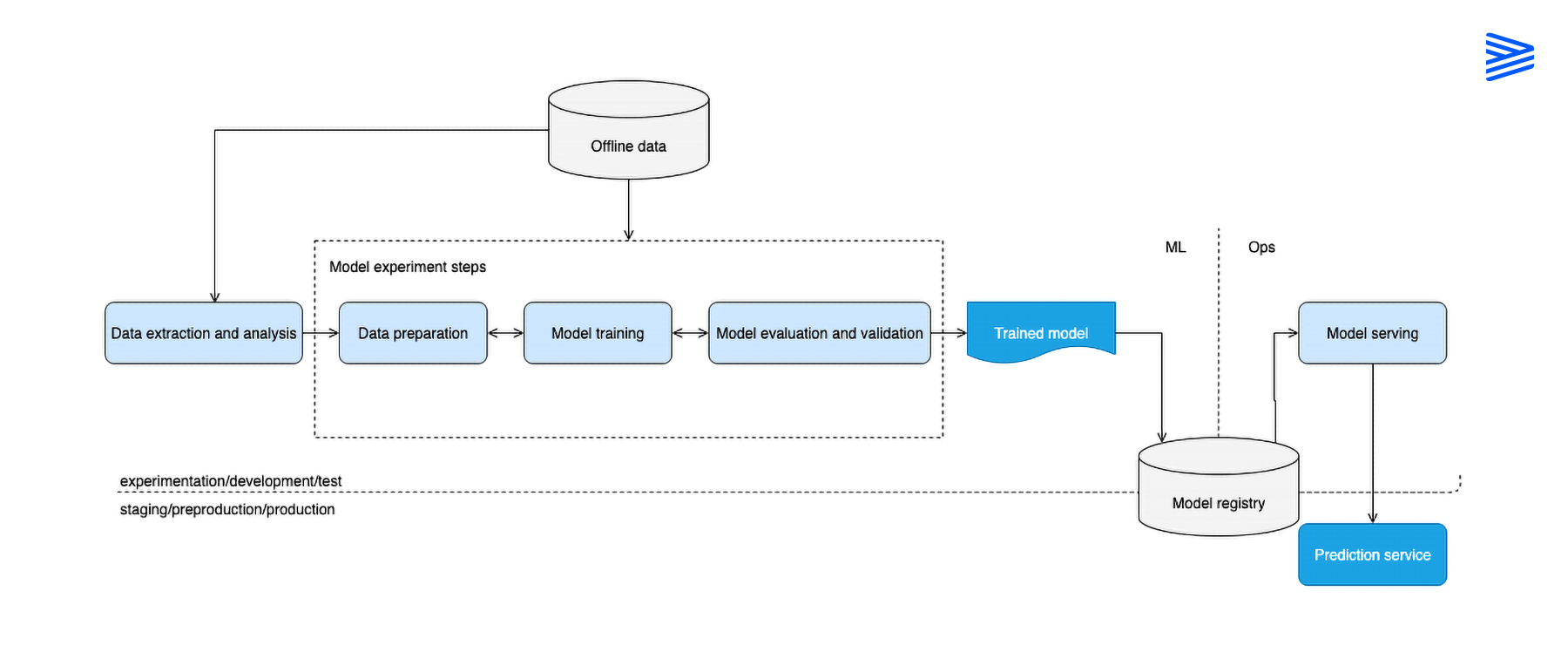

AI Systems use structured input processed by classic ML models (e.g., XGBoost, logistic regression), neural nets, or single-call LLMs, and output predictions or classifications deployed as REST APIs or batch pipelines. AI Agents, in contrast, use a looped control architecture that interleaves perception, reasoning, and action, with stateful, feedback-driven control flow and adaptive tool use.

Senior Software Engineer at Cheesecake Labs, leading AI initiatives and building productivity-driven applications using Rust and TypeScript. She also heads the internal AI Guild, driving innovation across teams and projects.